AI Agent Harnesses: Infrastructure-Centric AI Development

Why will the agent harness (infrastructure, memory, and tooling), rather than the AI model, determine whether your AI agents operate reliably in 2026?

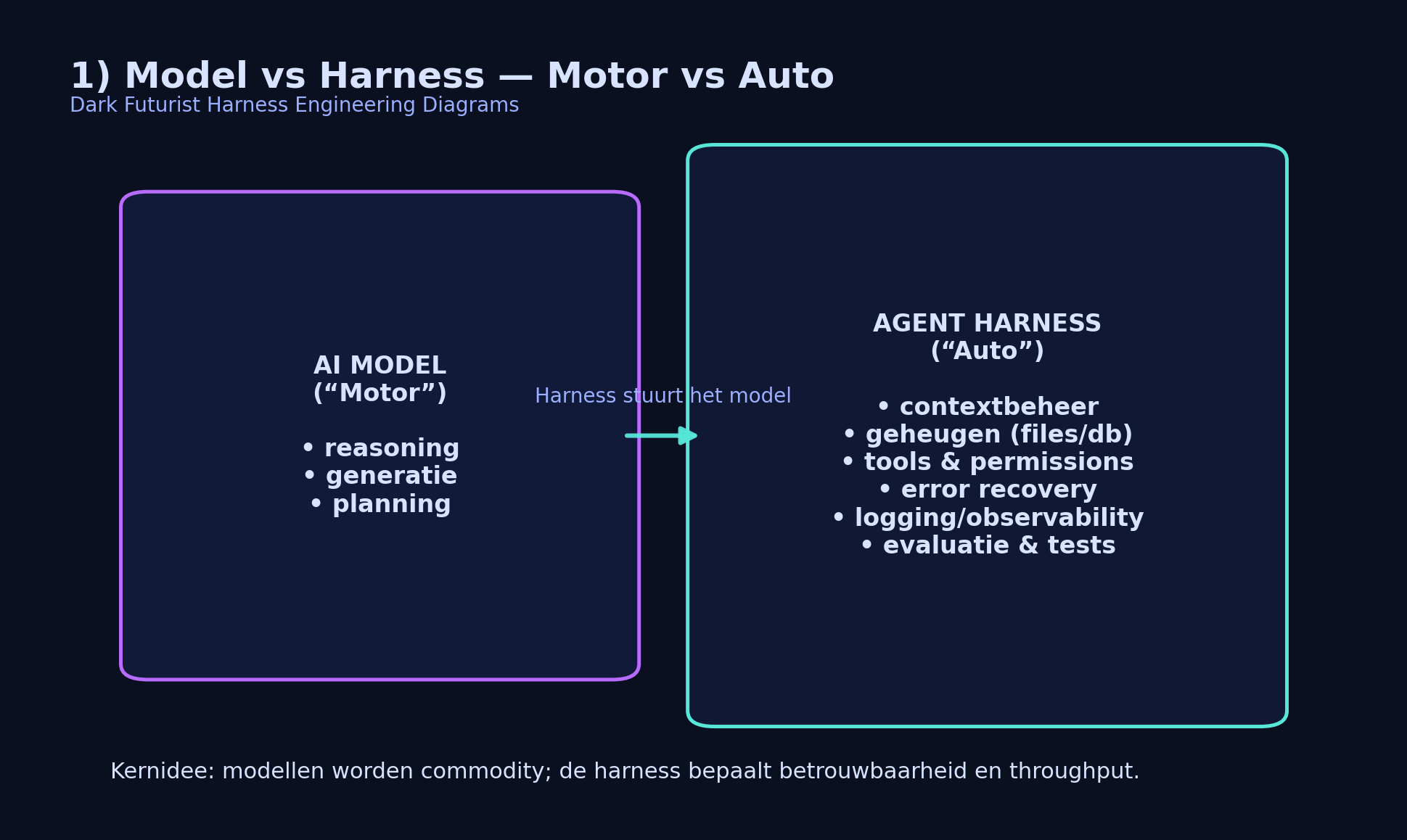

In recent years, almost every AI discussion revolved around one question: which model is the best? GPT-4, Claude, Gemini — faster, smarter, larger context window. But while everyone was looking at the engine, something else turned out to be decisive in practice.

It’s not the engine that determines if you win the race. The car does.

In 2026, the focus will shift from the model itself to the surrounding infrastructure: the agent harness. That sounds technical, but the idea is surprisingly logical. In this article, I will guide you through it step by step, first in plain terms and then technically, so that you know not only what a harness is but also why it is becoming so important.

Model vs Harness — The Car Analogy

Imagine an extremely powerful engine. 800 horsepower. Perfectly tuned. But you set that engine on the ground without a chassis, without a steering wheel, without brakes, and without a dashboard.

What do you have then? An impressive piece of technology… that you can’t do anything with.

That’s exactly what an AI model is without good infrastructure. The model can reason, generate text, and make plans. But on its own it cannot:

- Keep track of what it did yesterday

- Decide what should be retained

- Systematically correct mistakes

- Remain consistent over multiple sessions

- Handle tools and external systems safely

That’s where the harness comes into play.

The harness is the complete car: steering, brakes, navigation, fuel management, maintenance log, and safety systems. The model is the engine — powerful, but useless without control.

What Does a Harness Do Specifically?

An agent harness is essentially the control layer that determines how the model behaves in the real world. It usually consists of several components:

- Context Management: What do you provide the model with and what not? Stuffing everything into the prompt may seem convenient, but often leads to noise and confusion.

- External Memory: Instead of keeping everything in the context window, use files or a database as long-term memory.

- Tool Orchestration: Which tools can the agent use? What do the interfaces look like? What happens in case of a failed call?

- Error Recovery: What if a test fails? What if an API times out? A good harness catches that and adjusts.

- Logging & Observability: Without logs, you have no idea why an agent fails. A harness ensures that everything remains traceable.

Think of it as the difference between a smart intern without guidance and the same intern in a well-organized company with clear processes.
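To make the error-recovery and logging components above more concrete, here is a minimal sketch in Python. The function name `call_with_retry`, its parameters, and the log format are assumptions made for illustration, not part of any specific harness: it wraps a single tool call, logs every attempt, and retries a fixed number of times before giving up.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("harness")


def call_with_retry(tool: Callable[[], T], name: str,
                    attempts: int = 3, delay: float = 0.0) -> T:
    """Run a tool call, log every attempt, and retry on failure (hypothetical helper)."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            result = tool()
            log.info("tool=%s attempt=%d status=ok", name, attempt)
            return result
        except Exception as exc:  # broad on purpose: this is the recovery boundary
            log.warning("tool=%s attempt=%d status=error error=%r", name, attempt, exc)
            if attempt == attempts:
                raise  # out of retries: surface the error to the caller
            time.sleep(delay)
    raise AssertionError("unreachable")
```

A real harness would likely distinguish retryable errors (a timeout) from permanent ones (a malformed request), but even this small wrapper leaves a trace of what the agent tried and why it failed.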

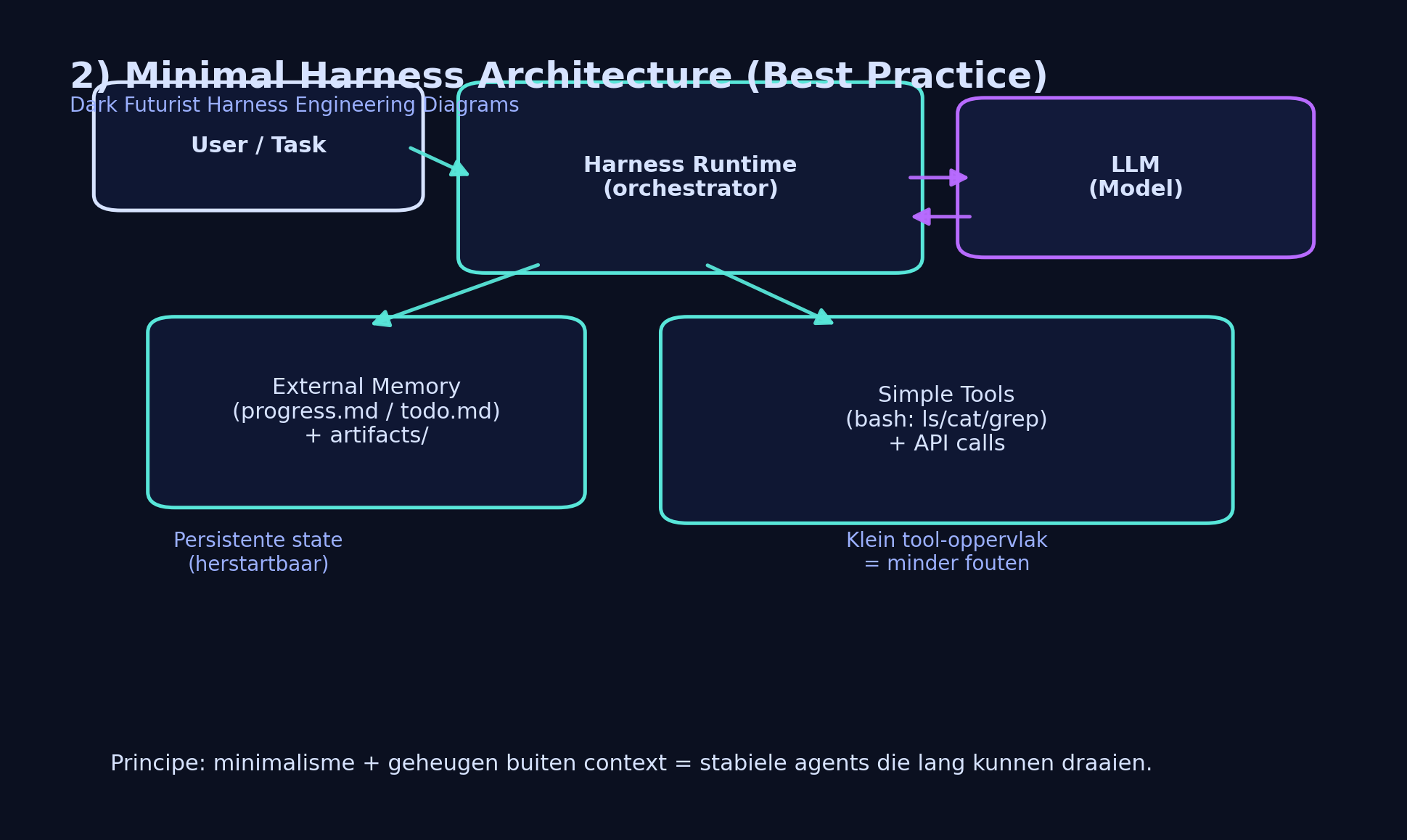

Minimalist Architecture (Best Practice)

An interesting development is that more powerful models often perform better in a simpler environment.

That sounds contradictory. You would think: the more tools, the better. But in practice, many teams see the opposite happen.

When an agent has access to dozens of specialized tools, the model first has to choose which tool is suitable, then correctly fill in parameters, and then handle error messages. Each step is an extra chance for misinterpretation.

By limiting the toolset to simple, predictable tools — such as standard bash commands like ls, cat, and grep — behavior often becomes more consistent.

Less choice stress. Less noise. More stability.
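As an illustration of such a deliberately small toolset, the sketch below exposes one tool that only accepts the three commands mentioned above. The names `run_tool` and `ALLOWED_COMMANDS` are hypothetical, and the allowlist does not restrict which paths the agent may read, so treat it as a starting point rather than a sandbox.

```python
import shlex
import subprocess

ALLOWED_COMMANDS = {"ls", "cat", "grep"}  # the three commands named in the text


def run_tool(command_line: str, timeout: float = 10.0) -> str:
    """Run one allowlisted command without a shell and return its output as text."""
    args = shlex.split(command_line)
    if not args or args[0] not in ALLOWED_COMMANDS:
        return f"error: command not allowed: {command_line!r}"
    try:
        done = subprocess.run(args, capture_output=True, text=True, timeout=timeout)
    except (OSError, subprocess.TimeoutExpired) as exc:
        return f"error: {exc}"
    if done.returncode != 0:
        # Note: grep also exits with status 1 when it simply finds no match.
        return f"error (exit {done.returncode}): {done.stderr.strip()}"
    return done.stdout
```

Because every call goes through one function with one return type (a string), the model always sees the same kind of result, whether the command succeeded or not.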

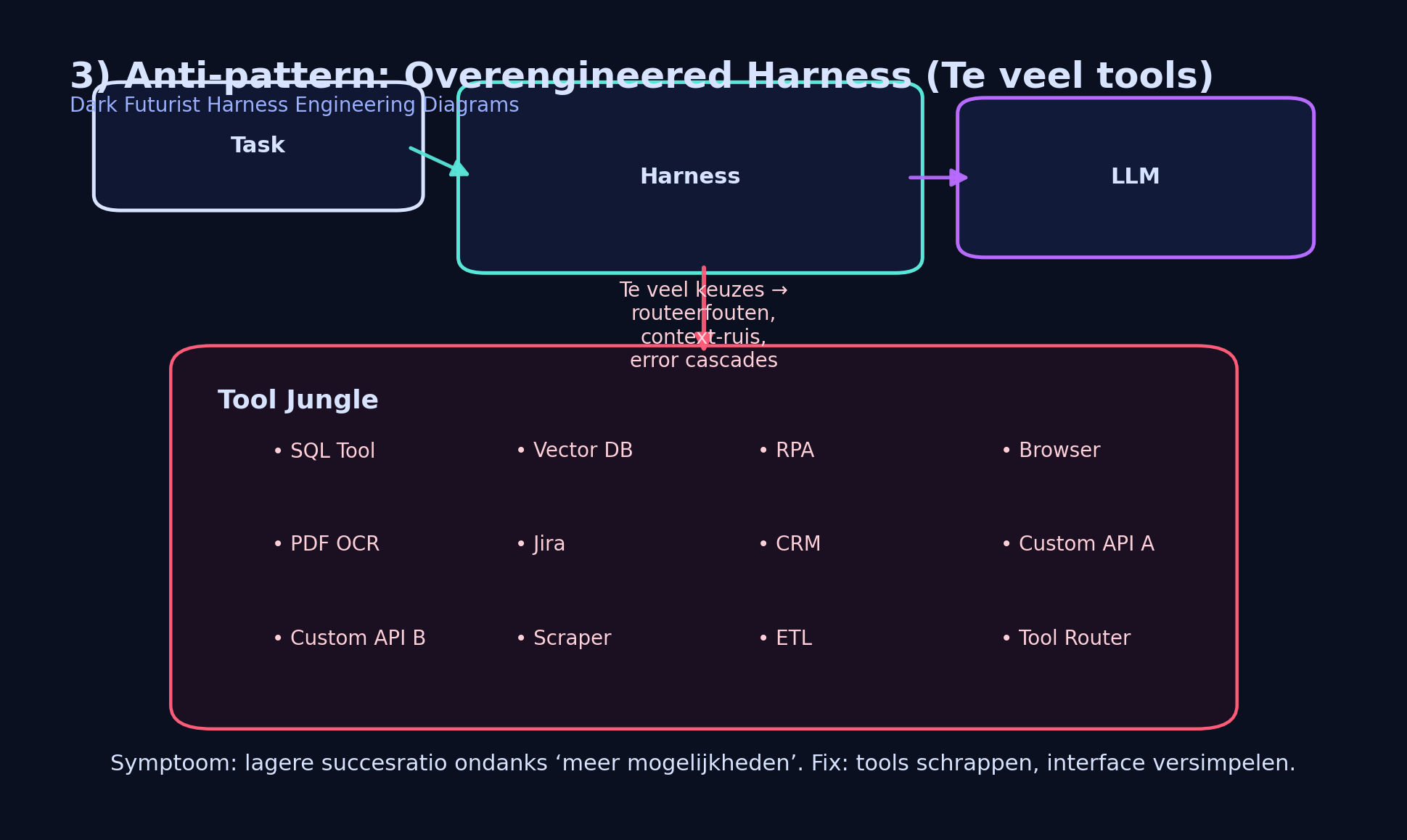

The Anti-Pattern: Too Many Tools

Overengineering is tempting. You build routers, special API wrappers, complex schemas, evaluation modules. It feels professional. But for a language model, this can become a maze.

What happens then:

- The agent chooses the wrong tool.

- The output is misinterpreted.

- The next step builds on an error.

- Errors accumulate.

More options do not automatically mean better performance. Sometimes the opposite is true.
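A rough back-of-the-envelope calculation shows why accumulating errors hurt so much. The sketch assumes, purely for illustration, that each step succeeds independently with the same probability; the 95 percent figure is a made-up example, not a measurement.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming steps fail independently."""
    return per_step ** steps


for steps in (1, 5, 10, 20):
    print(steps, round(chain_success(0.95, steps), 3))
# prints:
# 1 0.95
# 5 0.774
# 10 0.599
# 20 0.358
```

Under these assumptions, a chain of ten steps that are each 95 percent reliable completes without error only about 60 percent of the time, which is why removing unnecessary steps and choices pays off.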

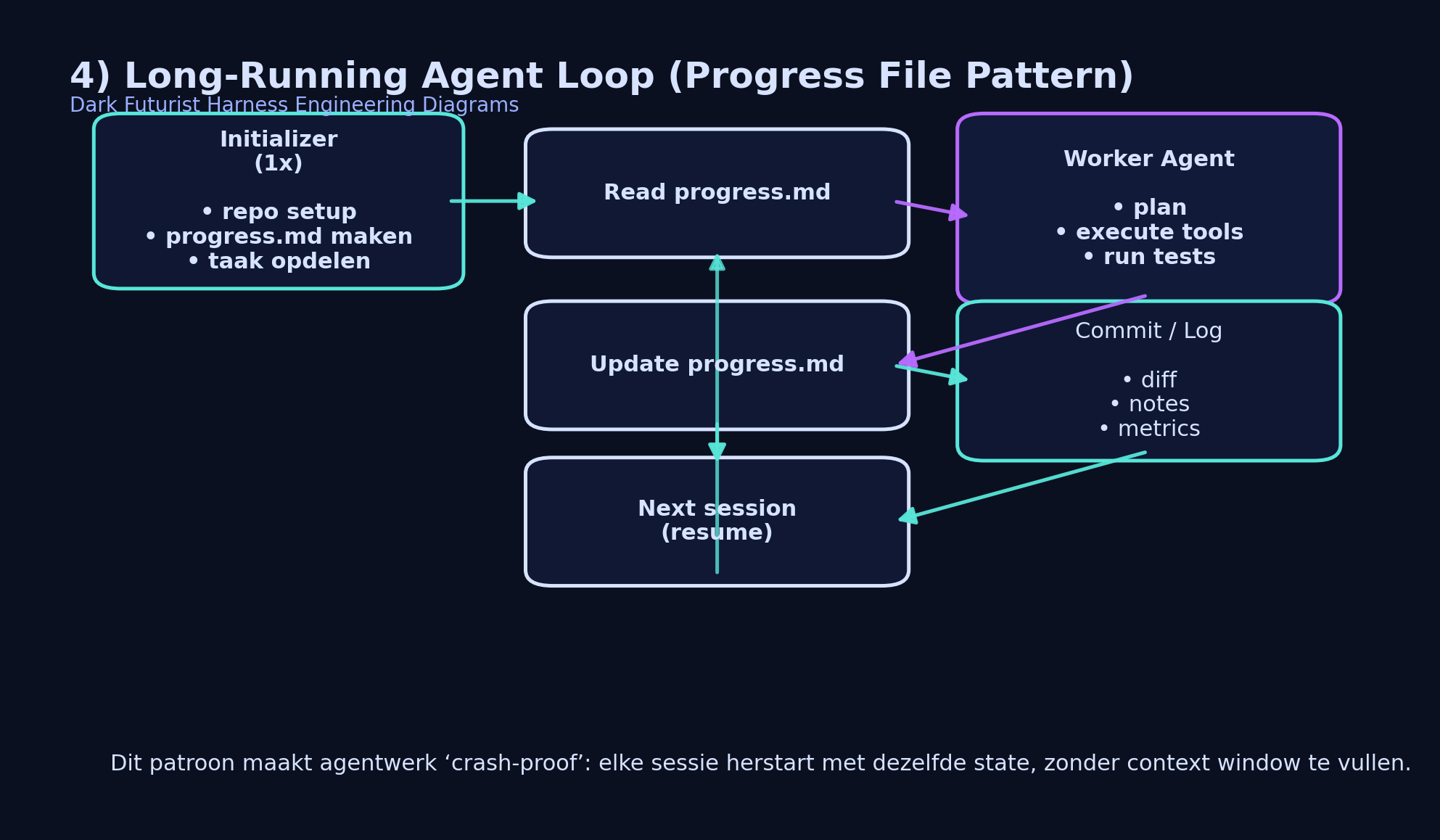

Long-Running Agents & Progress Files

One of the biggest challenges with AI agents is memory. Models have a limited context window. If you stuff everything into it, the relevant details get buried in noise. Moreover, the model “forgets” everything that happened outside the current session.

An elegant solution is to use a simple progress file — for example, progress.md.

The procedure is simple:

- The agent reads the progress file at each start.

- It performs one concrete task.

- It updates the file with the new status.

This creates long-term memory outside the model itself. The agent can work for days or weeks without cramming everything into the context window.

Example Progress File Implementation

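```python
from pathlib import Path

PROGRESS_FILE = Path("progress.md")

# Read the progress recorded by the previous run (empty on the first run)
state = PROGRESS_FILE.read_text() if PROGRESS_FILE.exists() else ""

# call_model and task are placeholders for your own model client and task description
response = call_model(task, state)

# Write the updated status back so the next run can pick up from here
PROGRESS_FILE.write_text(response.updated_state)
```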

It's almost shocking how effective this simple technique can be.
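The "one concrete task per run" step can also be made explicit in the file format. The sketch below assumes, as an illustration only, that progress.md is a markdown checklist with `- [ ]` for open tasks and `- [x]` for finished ones; the helper names `next_task` and `mark_done` are hypothetical.

```python
from pathlib import Path
from typing import Optional

TODO, DONE = "- [ ] ", "- [x] "  # assumed checklist markers


def next_task(path: Path) -> Optional[str]:
    """Return the first unchecked task in the progress file, or None if all are done."""
    for line in path.read_text().splitlines():
        if line.startswith(TODO):
            return line[len(TODO):]
    return None


def mark_done(path: Path, task: str) -> None:
    """Check off the first open entry matching the task and write the file back."""
    lines = path.read_text().splitlines()
    for i, line in enumerate(lines):
        if line == TODO + task:
            lines[i] = DONE + task
            break
    path.write_text("\n".join(lines) + "\n")
```

Each run would call `next_task`, hand that single task to the model, and call `mark_done` only after the work has been verified, so an interrupted run simply retries the same task next time.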

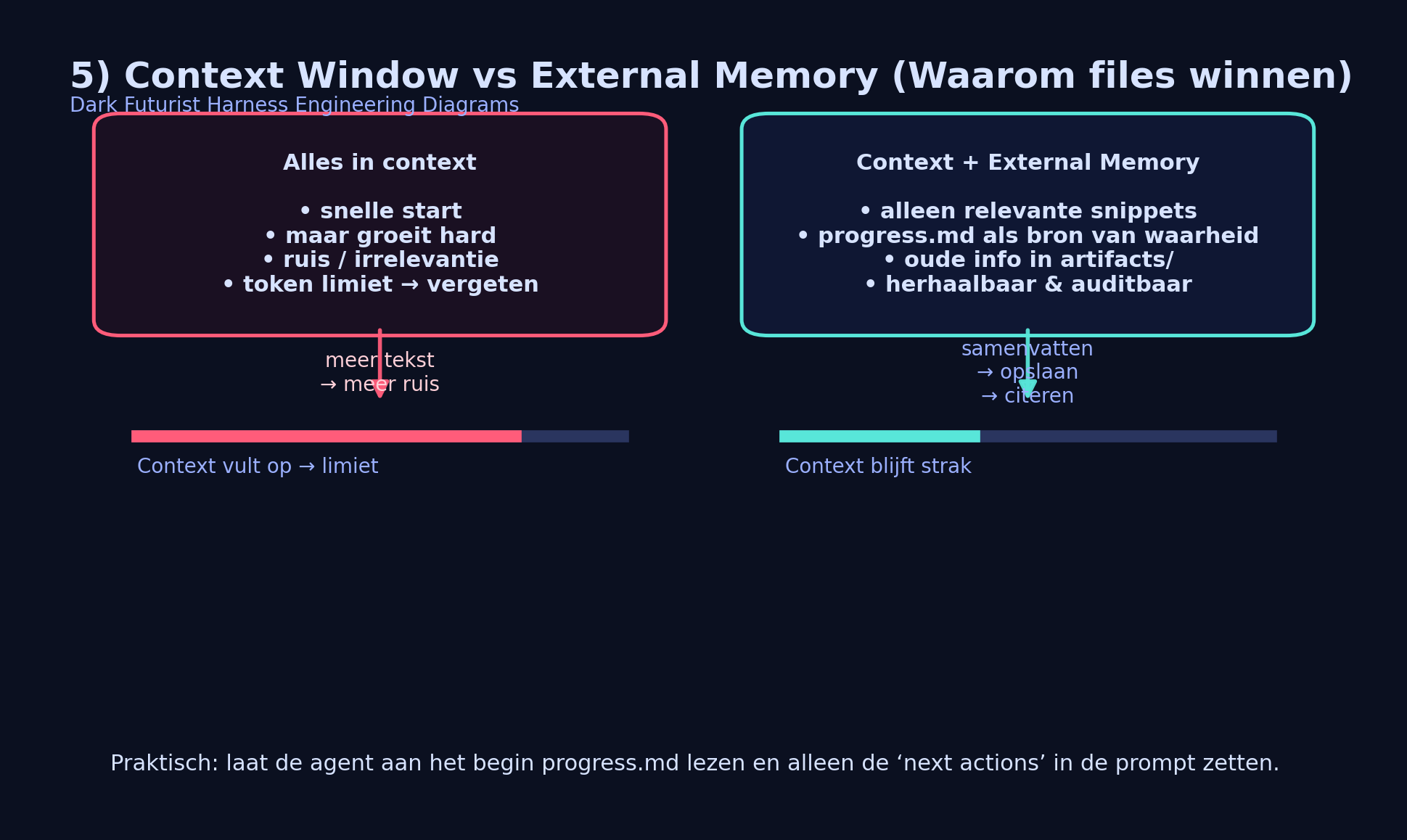

Context Window vs External Memory

Large context windows are impressive, but they don't solve everything. The more text you add, the greater the chance that irrelevant material distracts or confuses the model.

External memory — in files, a repo, or a database — allows you to inject only the relevant pieces into the prompt. The rest remains safely stored outside the model.

This makes agents more stable, more scalable, and easier to control.
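Selecting "only the relevant pieces" can start out very simply. The following sketch ranks stored notes by plain word overlap with the task and injects the top matches into the prompt. The function names, the note names, and the prompt layout are assumptions for illustration; a production system might use embeddings or a search index instead.

```python
from typing import Dict, List


def relevant_notes(notes: Dict[str, str], query: str, limit: int = 2) -> List[str]:
    """Return up to `limit` note names, ranked by how many query words each note contains."""
    words = set(query.lower().split())
    scored = []
    for name, text in notes.items():
        overlap = len(words & set(text.lower().split()))
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for stable output
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:limit]]


def build_prompt(notes: Dict[str, str], query: str, limit: int = 2) -> str:
    """Assemble a prompt containing only the selected notes, followed by the task."""
    chosen = relevant_notes(notes, query, limit)
    context = "\n\n".join(f"## {name}\n{notes[name]}" for name in chosen)
    return f"{context}\n\nTask: {query}"
```

Everything that is not selected stays in external storage, so the prompt grows with the relevance of the material rather than with the size of the memory.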

The Bitter Lesson (Richard Sutton)

General, scalable methods ultimately win over clever human tricks.

Richard Sutton made this argument in his famous essay The Bitter Lesson. His point: general methods that scale with computational power win in the long run over manually designed heuristics.

Applied to agents, this means:

- Not building increasingly complex prompts.

- Not adding more and more rules.

- But designing infrastructure that is repeatable and scalable.

A good harness lets the model think — but systematically forces it to remain consistent, controllable, and safe.

Conclusion

In 2026, it won't be the model with the highest benchmark score that wins. The winner will be the one who builds the best infrastructure.

Harness engineering thus becomes a core skill for AI developers. Not because the model becomes unimportant — but because the model truly comes into its own within a well-designed system.

The engine is becoming increasingly powerful.

But the one who builds the best car wins the race.